Stop, Ask, Wait

Part 2: A three-step system that uses context clues to identify AI-manipulated media

Two weeks ago, a friend showed me a TikTok video of a crowded airport in Israel. People were waiting in long lines, suitcases piled high on baggage carts. The text was a snarky joke; this was right after the news broke that Iran had fired hundreds of drones and missiles into the country, and the video seemed designed to make you think that Israelis were fleeing in droves.

“Is that even Israel?” I asked him. There was no identifiable information in the background, like a sign with the airport name. The people weren’t identifiably Israeli. It could have been an airport virtually anywhere in the world, if not for the superimposed text implying that it was Israel.

“Good point,” he said, and then we moved on.

This is one easy way people get fooled by misinformation—a passive, mindless consumption of something designed to mislead. It’s not, however, how people usually get fooled by AI and other forms of digital manipulation, like Photoshop.

It’s the opposite. Rather than everyday imagery like a busy airport, people tend to engage with digitally manipulated content because it incites them: the image is funny, surprising, or outrageous—satisfying in some way. It also tends to play on known context.

This explains two of the most well-known AI images to go viral, Pope Francis in his “Balenciaga” puffer jacket and former President Donald Trump being arrested. To this day, thinking about the Pope waking up one day and deciding to be a fashion plate makes me laugh. It went viral because it’s funny, and it’s funny because of how it plays on what we know about the Pope. You would never expect to find a (presumably) deeply spiritual man in such an outfit.

If you follow my steps for identifying AI-manipulated media, you’ll be able to easily tell that this photo is AI. The strange way the Pope is holding the—coffee cup? “Pope Salts”? tiny drum?—and the missing side of the chain the crucifix is magically hanging on are two blaring “it’s fake!” sirens.

But let’s say you didn’t spot anything wrong with the photo. What would you do if you had encountered this photo in the wild? How would you know whether it’s real?

NPR suggests following standard media literacy practice. Mike Caulfield, a research scientist at the University of Washington’s Center for an Informed Public, created the four-step SIFT system outlined in the linked-to article, which is: 1) Stop, 2) Investigate the source, 3) Find better coverage, and 4) Trace claims, quotes, and media to the original context.

It’s a great system, but it takes a decent amount of effort to figure out whether something is real. Most people simply don’t care that much.

I’d like to suggest that with AI imagery and with any digitally manipulated photo or video, we can simplify SIFT to the much less effort-intensive SAW: 1) Stop, 2) Ask, and 3) Wait.

Step One: Stop

Okay, stop. Seems simple enough, but how do you know when you should? You can make “stop” your go-to position while on social media—meaning, you don’t believe or share anything unless you have vetted it—and while I think that’s good media literacy hygiene, it’s likely pie in the sky that everyone will adopt that posture.

There is a second way, though, which I call the brain niggle. It draws on what we know about manipulated imagery, which is that it incites emotion in some way, and it treats that emotional reaction as a key indicator that what you’re looking at isn’t real.

When I was the managing editor of the print magazine Tricycle: The Buddhist Review, it was my job to collate multiple rounds of edits into a piece. Generally an article would go through a few rounds with the assigning editor, a round with the editor-in-chief, two rounds with the copy editor/fact checker, and a final round with the proofreader. After doing this for a certain number of magazine editions, I learned that errors had a habit of sneaking into the print product when I ignored a “brain niggle.” I would see something in the text, feel a niggle in the back of my brain telling me something was off, and then ignore it or rationalize it away.

I couldn’t have told you, every time, exactly what the niggle was reacting to, but there the reaction was. If I followed it up, I would see that a certain edit hadn’t been included, or something wasn’t in line with our style guide, or any number of things that needed to be fixed.

The same thing happens when we see AI or any digitally manipulated content on social media. It might not be a brain niggle, exactly, but it will be a reaction, internal or external. Sometimes it’s one of slight disbelief. Your brows furrow and your head tilts just slightly. What am I looking at? Maybe it’s one of satisfied delight. Your eyes widen or your mouth curves with the deliciousness of seeing Trump being aggressively taken into custody. Maybe it’s one of shock—whoa, that’s crazy, you think. Or maybe you feel annoyed or angry, and heat rises in your chest.

Any one of those reactions is a good sign that it’s time to stop. Just by doing that, you have introduced enough friction to interrupt the usual “see-like-share” chain of social media. And you’ve “turned on” your mind enough to move on to step two.

Step Two: Ask

After step one, you could always follow through on the rest of SIFT. But let’s say you’re pressed for time or just feeling too lazy to go do a bunch of Googling.

If so, it’s time to move on to step two: Ask. Specifically, ask yourself context questions about what you’re looking at. They can be basic. Has this Pope, or any Pope, ever worn flashy clothes like this before? Is a leading religious figure likely to team up with a fashion brand? Would the Catholic Church, not exactly a bastion of modern thought, green-light such a get-up?

Research shows that asking context questions is exactly how humans do better than computer models at identifying real-world deepfakes. In one experiment, researchers found that the majority of participants could accurately identify whether a video of a well-known political figure like Vladimir Putin or Kim Jong-un was a deepfake by asking themselves questions like whether Putin speaks English or how likely he was to say a particular thing.

Of course, asking context questions leaves some room for error. This journalist was fooled by the Pope’s puffer photo because they didn’t know much about the Pope to begin with. (Although it’s also worth noting that the photo went viral in the very early days of AI-created imagery, when we were completely unused to how good the quality had gotten.)

Once you have stopped and asked yourself a couple of questions, you’ve likely now added a seed of doubt to the friction of “stop.” You may not know for sure if what you’re looking at is real, but you know that you might not know.

Step Three: Wait

This step is the easiest, because it requires doing nothing at all. While there is a lot of concern about AI and digital manipulation spreading misinformation and fake news through the public, surprisingly little is said about correctives spreading in the same way. (Other forms of misinformation are more subtle and much harder to correct.)

By now, virtually everyone knows that the Pope and his puffer weren’t real. Same goes for the Trump photos, especially after his (very real) mug shot came out. Mainstream media, of course, will focus their attention on anything particularly novel or important; articles about both of our examples now abound.

Tracing our two examples back through social media leads to similar corrective mechanisms. Tweets about them have Community Notes, like the one below, appended. While the Community Note system isn’t perfect, the wisdom of crowds works pretty well on AI and will catch it over time:

On Facebook, it’s difficult to even find the Pope in his coat or Trump being taken into custody—most links from Google are broken. Presumably Meta took the photos down.

While you wait for the big corrective Internet gears to start grinding, you can also check directly to see what the professionals have to say about a certain image or video. One good resource is Reuters’ Fact Check page. There is usually a lag between the time something goes viral and when fact-checkers can get to it, but Reuters catches a lot of the big stuff.

Whether you use SIFT, SAW, or nothing at all, one silver lining to our AI-spattered media space is that despite all the concern about it, so far it has had surprisingly small real-world impact. Even if you’re in the tiny percentage of the population who thinks the Pope once wore a really fashionable coat, does it matter?

“AI lowers the barriers to entry dramatically and allows more convincing and more creative uses of deception. But the result is just spam,” Carl Miller, a researcher with the Centre for the Analysis of Social Media at the British think tank Demos, told Politico in 2023.

An odd benefit of a society awash in distrust is that the public is also far from knee-jerk acceptance of the kind of AI imagery and video that would have significant repercussions. “There’s already a high level of skepticism among the public regarding earth-shattering images which have come from untrusted or unknown places,” Miller continued in the same article.

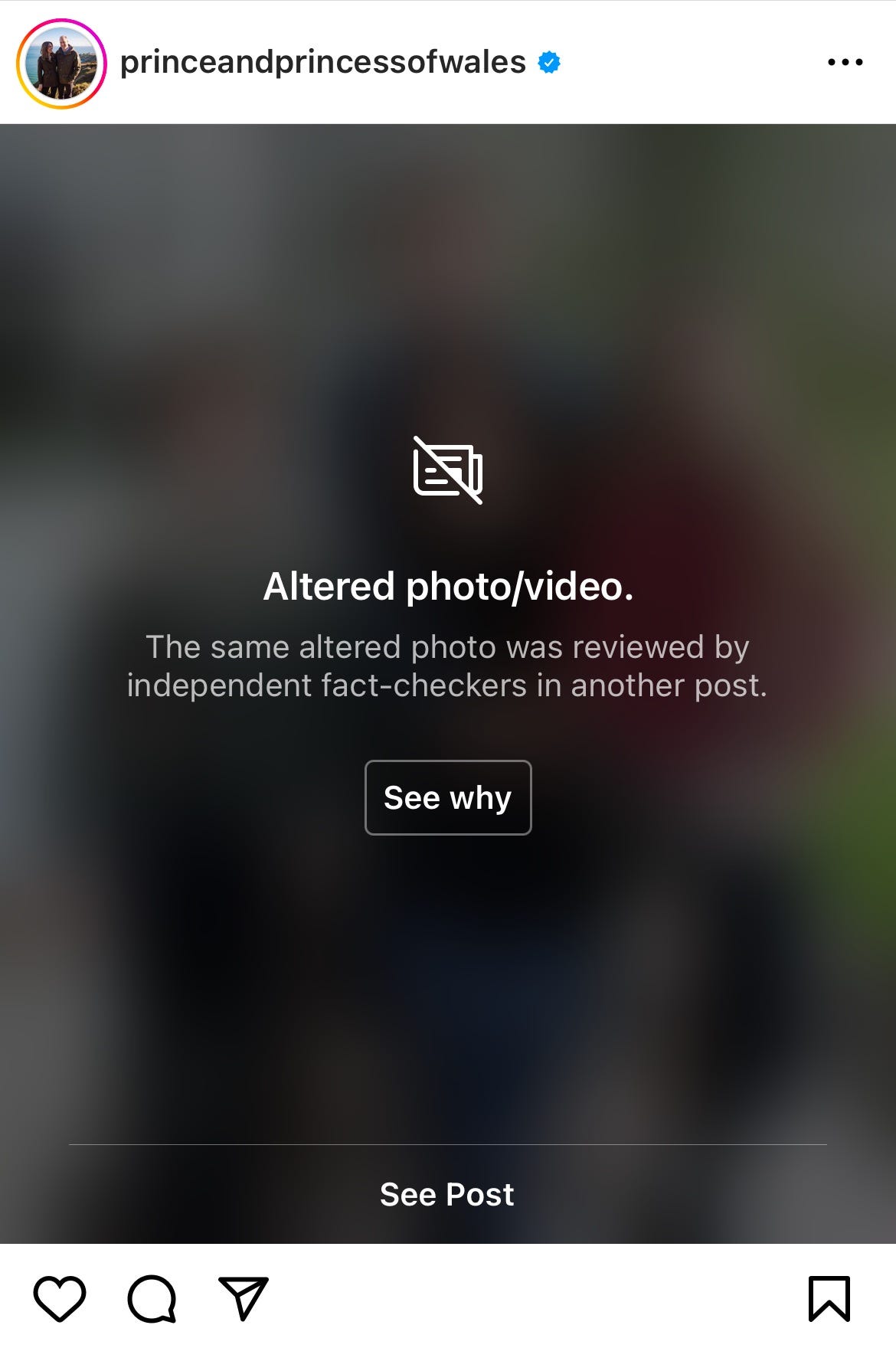

The infamous “Kate Middleton and family” photo is a perfect example of both of Miller’s points. How quickly did the online masses pick apart the bad Photoshop job of the British princess? It was their reaction that led major agencies like the Associated Press to issue a kill notice on the photo. On Instagram now, where the photo originally appeared, you can’t even see it without clicking through Instagram’s warning that it has been altered.

And while the conspiracy theorists are still running hot with silly stories about Kate, most of us now know that she is being treated for cancer.

So, the next time you see sharks on the Florida highway or Elon Musk selling you a get-rich-quick scheme, try SAW. If you have any thoughts, reactions, or ideas, please leave them in the comments!